Introduction

A regional marketing director at a consumer goods company ran a high-priority outreach campaign targeting 8,000 accounts identified in the CRM as active, high-value customers. Two weeks later, after a significant share of the budget had been spent, the results came in: 1,200 emails had bounced, and more than 200 contacts had changed roles. The targeting model had performed well, but on data that was quietly broken.

This is not an isolated case. It is the operational reality today across industries such as BFSI, CPG, retail, and hospitality.

Data teams are not failing because of a lack of talent or technology. They are failing because the sheer volume, velocity, and fragmentation of enterprise data have outpaced every manual process designed to govern it.

How Are Enterprises Solving Data Quality Issues In 2026?

The word ‘agentic’ is used loosely across industries, but it is worth being precise.

An agentic system perceives its environment, forms a goal, and takes a sequence of actions to achieve that goal without requiring a human to specify each step.

Applied to data management and governance, this means the system does not wait to be asked whether a data pipeline is healthy. It:

- monitors the pipeline continuously

- detects anomalies

- traces the failure back to its origin

- assesses downstream impact

- initiates a fix before the business is affected
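The steps above can be sketched as one pass of a loop. This is a minimal illustration, not a description of any particular product: the dataset names, row counts, the 20% drop threshold, and the `quarantine_latest_run` action are all hypothetical, and a real system would use richer signals than row counts.

```python
from collections import deque

# Hypothetical lineage graph: each dataset maps to the datasets that read from it.
DOWNSTREAM = {
    "crm_export": ["contacts_clean"],
    "contacts_clean": ["customer_360"],
    "customer_360": ["campaign_targets", "churn_dashboard"],
}

# Hypothetical monitoring data: dataset -> (typical row count, latest row count).
ROW_COUNTS = {
    "crm_export": (8000, 7950),
    "contacts_clean": (7900, 4100),
    "customer_360": (7900, 4100),
}


def detect_anomalies(row_counts, max_drop=0.2):
    """Return datasets whose latest row count fell more than max_drop below typical."""
    return {
        name
        for name, (typical, latest) in row_counts.items()
        if latest < typical * (1 - max_drop)
    }


def trace_origin(anomalous, downstream):
    """Return the anomalous datasets that have no anomalous upstream parent."""
    has_anomalous_parent = {
        child for parent in anomalous for child in downstream.get(parent, [])
    }
    return sorted(anomalous - has_anomalous_parent)


def assess_impact(origin, downstream):
    """Walk the lineage graph breadth-first and collect everything fed by origin."""
    seen, queue = set(), deque([origin])
    while queue:
        for child in downstream.get(queue.popleft(), []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return sorted(seen)


def run_cycle(row_counts, downstream):
    """One pass: detect anomalies, trace each to its origin, assess impact, propose a fix."""
    anomalous = detect_anomalies(row_counts)
    return [
        {
            "origin": origin,
            "impacted": assess_impact(origin, downstream),
            "action": "quarantine_latest_run",  # placeholder remediation step
        }
        for origin in trace_origin(anomalous, downstream)
    ]


for finding in run_cycle(ROW_COUNTS, DOWNSTREAM):
    print(finding)
```

In this toy data, both `contacts_clean` and `customer_360` look anomalous, but only `contacts_clean` is reported as the origin because the other merely inherits the problem from it.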

So, you may ask: Is an agentic AI system different from automation?

Yes, an agentic AI system for enterprises is categorically different from automation. Automation follows a fixed set of instructions to accomplish tasks and does not make decisions when unexpected issues arise. An agentic AI system for data management, however, reasons about the current situation, sets its own goals, and determines which actions to take next without relying on detailed human instructions for each step.

This distinction matters because enterprise data environments are not static. Schemas change, business definitions drift, and new data sources are added without downstream dependencies being updated. Any system that depends on pre-written rules will eventually produce wrong outputs, and it will often do so silently.
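To make the "silent" failure concrete, here is a small sketch that contrasts a fixed rule with a schema comparison. The column names, the expected schema, and the `fixed_rule` check are hypothetical, and type inference from the first non-null value is a deliberate simplification.

```python
# Hypothetical schema that the downstream rules were written against.
EXPECTED_SCHEMA = {"account_id": "int", "email": "str", "region": "str"}


def fixed_rule(record):
    """A pre-written rule: it only checks that an email is present."""
    return record.get("email") is not None


def infer_schema(records):
    """Infer column -> type name from the first non-null value seen per column."""
    schema = {}
    for record in records:
        for column, value in record.items():
            if value is not None and column not in schema:
                schema[column] = type(value).__name__
    return schema


def diff_schema(expected, observed):
    """Report columns that were added, removed, or changed type."""
    shared = set(expected) & set(observed)
    return {
        "added": sorted(set(observed) - set(expected)),
        "removed": sorted(set(expected) - set(observed)),
        "retyped": sorted(c for c in shared if expected[c] != observed[c]),
    }


# The source renamed "region" and switched account_id to a string key.
records = [{"account_id": "A-17", "email": "a@example.com", "sales_region": "EMEA"}]

print(all(fixed_rule(r) for r in records))  # the fixed rule still passes
print(diff_schema(EXPECTED_SCHEMA, infer_schema(records)))
```

The fixed rule keeps passing because it never looks at the columns that changed, while the schema comparison surfaces the rename and the type change.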

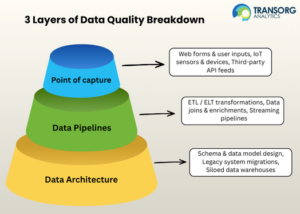

Three Layers Where Enterprise Data Actually Breaks

Most data quality frameworks treat data quality as a single problem. In practice, breakdowns happen across three structurally different layers:

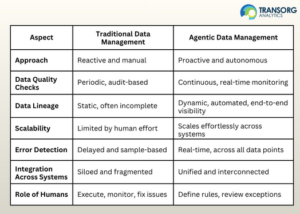

Why Traditional Data Management Cannot Scale to These Issues

Compared with agentic data management, traditional data management has clear limitations at enterprise scale:

- Traditional data governance runs on cycles of policies, reviews, and exceptions. That model was built for a slower world. At enterprise scale in 2026, data volumes, architecture complexity, and the cost of governance gaps have all outpaced it. For example, a mid-sized enterprise with 50 data sources, each updating daily, generates thousands of distinct data events that require validation. A team of five to seven people cannot meaningfully oversee that volume. They can only respond to escalations, which means governance becomes reactive by default.

- Rules are written by people who understood the system at one point in time. When business logic shifts due to new markets, acquisitions, or regulatory changes, those rules lag behind. And while they lag, your data keeps moving under outdated constraints.
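A back-of-the-envelope calculation shows how the 50-source example reaches thousands of validation events. Only the source count, the daily refresh, and the team size come from the example above; the number of tables per source and checks per table are illustrative assumptions, not measured figures.

```python
SOURCES = 50            # from the example above
REFRESHES_PER_DAY = 1   # "each updating daily"
TEAM_SIZE = 6           # midpoint of the team size in the example above

# Assumptions for illustration only.
TABLES_PER_SOURCE = 10
CHECKS_PER_TABLE = 4    # e.g. schema, null rate, volume, freshness


def daily_validation_events(sources, refreshes, tables, checks):
    """Number of individual checks that would need a verdict each day."""
    return sources * refreshes * tables * checks


events = daily_validation_events(
    SOURCES, REFRESHES_PER_DAY, TABLES_PER_SOURCE, CHECKS_PER_TABLE
)
per_person = events / TEAM_SIZE

print(f"{events} validation events per day")
print(f"about {per_person:.0f} per team member per day")
```

Under these assumptions the team faces 2,000 checks a day, or roughly 333 per person, which is why manual oversight collapses into responding to escalations.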

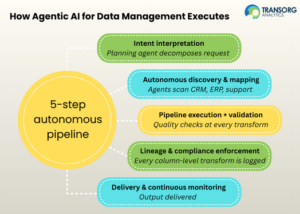

How Agentic AI for Data Management Works: A Closed-Loop System

In an agentic data management environment, this is what happens:

- Intent Interpretation – An AI planning agent reads your business request, identifies the required data assets, reviews the applicable governance policies, and sets up the necessary quality checks before any output is trusted. You state the intent; the system works out and carries out the steps.

- Autonomous Discovery and Mapping – Building on the intent, specialized AI agents now scan your systems automatically, finding where data lives, identifying how fields relate across platforms, and reconciling mismatches before a single record is moved. If your CRM calls it “Account Name” and your ERP calls it “Client ID”, the agent figures that out.

- Pipeline Execution and Validation – In an agentic data management workflow, data doesn’t just flow; at every step, it’s checked for fill-rate drops, null anomalies, and schema mismatches. If records fail, they’re flagged and routed for review. If they pass, they move forward.

- Lineage and Compliance Enforcement – The data ingestion agent automatically logs every column-level transformation and every change to a field. The consent validation agent checks consent restrictions. The compliance agent raises flags before data reaches a downstream consumer.

- Delivery and Continuous Monitoring – The output reaches the analytics consumer. But the agents don’t stop. When next month’s data arrives, the same checks run automatically. The view stays current. No one files a new request or reruns the pipeline manually. The system maintains itself.
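The validation step in this loop can be sketched as a gate that every batch passes through. This is a simplified illustration under stated assumptions: the required columns, the baseline fill rate, and the 10-point drop threshold are hypothetical, and "routing for review" is reduced to returning the flagged records.

```python
REQUIRED_COLUMNS = {"account_id", "email", "region"}  # hypothetical target schema


def fill_rate(records, column):
    """Share of records in which the column is present and non-empty."""
    if not records:
        return 0.0
    filled = sum(1 for r in records if r.get(column) not in (None, ""))
    return filled / len(records)


def validate_batch(records, baseline_fill, max_drop=0.10):
    """Return (passed, flagged, issues) for one batch.

    issues  : batch-level fill-rate drops against the baseline
    flagged : records with missing columns (schema mismatch) or null values
    passed  : records that may move forward
    """
    issues = []
    for column, baseline in baseline_fill.items():
        current = fill_rate(records, column)
        if baseline - current > max_drop:
            issues.append(
                f"fill-rate drop on {column}: {baseline:.2f} -> {current:.2f}"
            )

    passed, flagged = [], []
    for record in records:
        present = set(record)
        missing = REQUIRED_COLUMNS - present
        nulls = {c for c in REQUIRED_COLUMNS & present if record[c] in (None, "")}
        if missing or nulls:
            flagged.append(
                {"record": record, "missing": sorted(missing), "nulls": sorted(nulls)}
            )
        else:
            passed.append(record)
    return passed, flagged, issues


batch = [
    {"account_id": 1, "email": "a@example.com", "region": "EMEA"},
    {"account_id": 2, "email": None, "region": "APAC"},
    {"account_id": 3, "region": "EMEA"},
    {"account_id": 4, "email": "d@example.com", "region": "AMER"},
]
passed, flagged, issues = validate_batch(batch, baseline_fill={"email": 0.95})
print(len(passed), "passed;", len(flagged), "flagged;", issues)
```

Because the same function runs on every batch, the checks apply to next month's data exactly as they did to this month's, without anyone filing a new request.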

Agentic AI for Data Management: The Shift from Reactive to Proactive Data Operations

One of the most significant operational changes that agentic data management introduces is a shift in when problems are detected.

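The major difference between traditional and agentic data management is timing: traditional processes tend to surface a problem only after it has been escalated from downstream, while an agentic system aims to detect and address it before the business is affected.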

The Business Impact Of Agentic AI for Data Management

- Data engineering teams stop spending the majority of their time on incident response.

- Freed capacity shifts to higher-value work such as better analytical frameworks, data models, and sharper business questions.

- Reactive debugging decreases substantially.

- Agentic data management applies governance at the point of data movement. The compliance posture changes from retrospective documentation to continuous enforcement.

- CEOs and business stakeholders, such as heads of marketing, sales, finance, and operations, get access to data they can trust without needing to understand the technical process that produced it.

The Practical Starting Point for Enterprises

The practical path for enterprises to deploy agentic AI for data management is to identify the areas where reactive governance is causing the most measurable business cost, such as:

- the pipelines that fail most frequently

- the datasets that produce the most downstream complaints

- the compliance processes that consume the most manual effort
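One way to compare candidate areas against these three signals is a simple weighted score. The sketch below is only an illustration: the candidate names and numbers are made up, the equal weights are an assumption, and each metric is normalised against the highest value observed so that no single unit dominates.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    failures_per_month: int       # how often the pipeline fails
    downstream_complaints: int    # tickets raised by data consumers
    manual_hours_per_month: float # effort spent on manual compliance work


def rank_candidates(candidates, weights=(1.0, 1.0, 1.0)):
    """Return (name, score) pairs sorted from highest to lowest priority."""
    peak_f = max((c.failures_per_month for c in candidates), default=0)
    peak_c = max((c.downstream_complaints for c in candidates), default=0)
    peak_h = max((c.manual_hours_per_month for c in candidates), default=0)

    def norm(value, peak):
        return value / peak if peak else 0.0

    scored = [
        (
            c.name,
            weights[0] * norm(c.failures_per_month, peak_f)
            + weights[1] * norm(c.downstream_complaints, peak_c)
            + weights[2] * norm(c.manual_hours_per_month, peak_h),
        )
        for c in candidates
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical candidates.
candidates = [
    Candidate("orders_ingest", 12, 3, 10),
    Candidate("crm_contacts_sync", 2, 9, 40),
    Candidate("consent_audit_export", 1, 1, 5),
]

for name, score in rank_candidates(candidates):
    print(f"{name}: {score:.2f}")
```

With these made-up numbers, `crm_contacts_sync` ranks first even though it fails less often than `orders_ingest`, because complaints and manual effort also count. Adjusting the weights is how a team would encode which cost matters most to it.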

Key Takeaways

- Data quality issues originate across three layers – the point of capture, the pipeline, and the architecture – and must be addressed at all three simultaneously.

- Agentic data management applies continuous, autonomous oversight to the full data life cycle without requiring human intervention at each step.

- Continuous governance through agentic AI is the foundation for a reliable customer 360, real-time analytics, and audit-ready compliance.

- The shift from reactive to proactive data operations reduces the cost of data failures and frees data teams to focus on higher-value work.

If your teams are spending more time managing data than using it, connect with us today to build agentic data management capabilities through TransOrgIQ, an autonomous data management platform built for complex, multi-source enterprise environments.

FAQs

1. What are the 4 pillars of data governance and management?

The four pillars of data governance are data quality, data ownership, data protection and compliance, and data lifecycle management. Together, they ensure data is accurate, secure, and used responsibly across its lifecycle.

2. How does Agentic AI improve data quality?

Agentic AI enhances data quality by continuously monitoring datasets, identifying anomalies, and automatically correcting inconsistencies. It uses machine learning to learn patterns over time, ensuring cleaner, more reliable data without relying heavily on manual validation processes.

3. What are the benefits of agentic data management for enterprises?

Agentic AI helps enterprises improve efficiency, reduce operational costs, enhance data accuracy, and enable real-time decision-making. It also supports scalability by managing complex data environments autonomously.

4. Can Agentic AI simplify data migration processes?

Yes, Agentic AI can significantly simplify data migration by automating data mapping, validation, and error resolution. It can identify inconsistencies across systems and adapt migration workflows dynamically, reducing downtime and ensuring smoother transitions.

5. What challenges should organizations consider when adopting agentic data management?

Organizations should consider challenges such as integration with legacy systems, ensuring data security, maintaining transparency in AI decisions, and managing change within teams. A well-defined strategy and governance framework can help overcome these barriers.

6. What are the key use cases of Agentic AI for data management?

Common use cases include automated data quality management, intelligent data migration, real-time anomaly detection, data governance enforcement, and managed data pipelines. These capabilities help reduce operational overhead and improve data reliability.